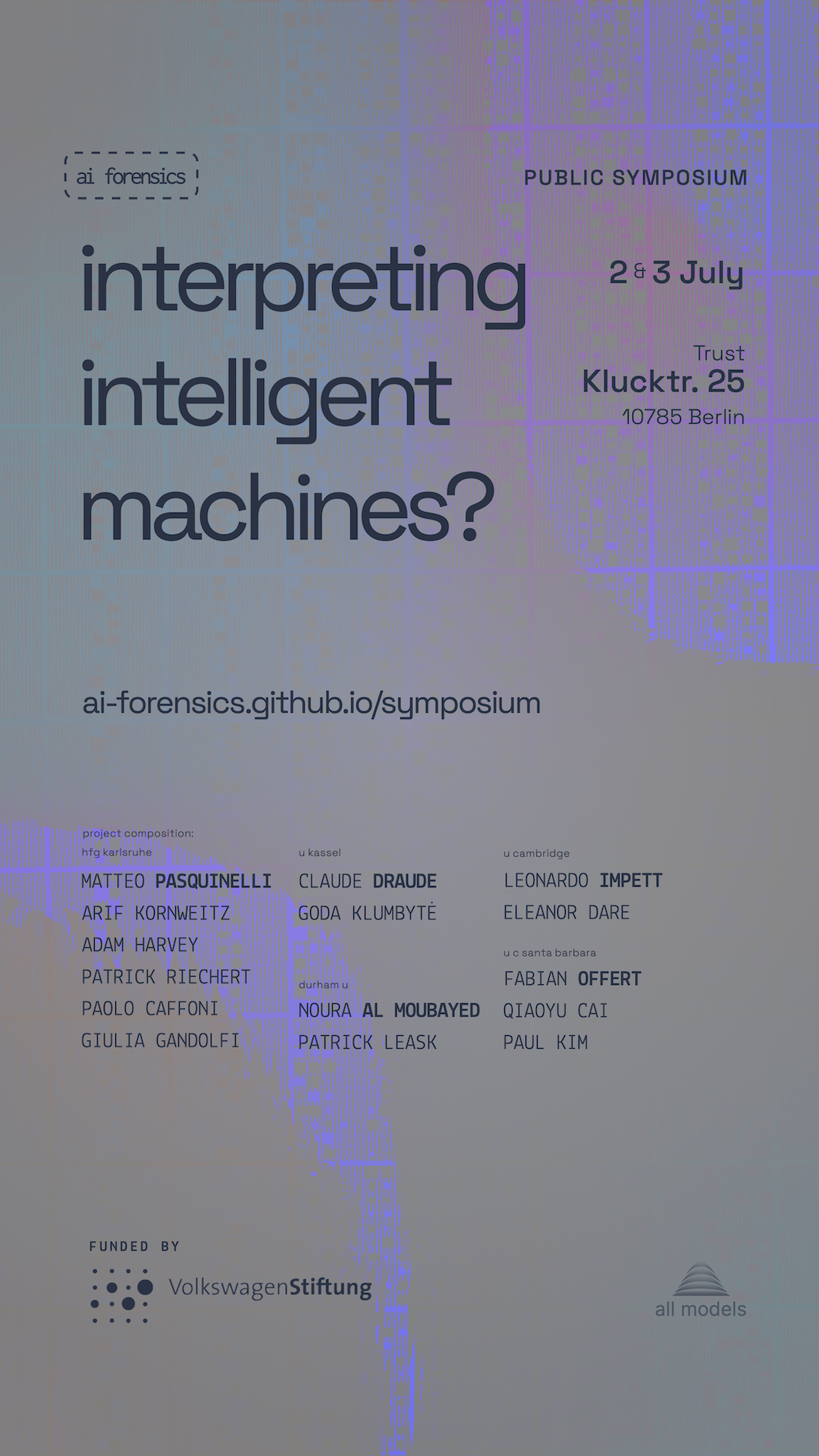

On 2 and 3 July 2025, the AI Forensics team held a public symposium:

Interpreting intelligent machines?

What does it mean to interpret machines? What is language to a computer? What is the epistemology determining artificial intelligence? Is there an act of interpretation common to traditional hermeneutics and the nascent attempts to reverse engineer neural algorithms? According to which historical trajectories do social relations, processes of abstraction, definitions of intelligence, and predictive models configure one another? What epistemic horizons thereby impose themselves on us, and what modes of knowing could we mobilise in renewal?

Logistical information

The symposium took place over two days (Wed., 2 July & Thu., 3 July) at Trust in Berlin. It featured a mix of lectures, panel discussions, and more participatory formats.

- The symposium was livestreamed.

- Selected recordings & materials will be made available.

- Scheduling changes: the programme on the current page reflects the final running order.

You can request reminders and updates by email through this form. Personal data deleted.

- Add the symposium to your calendar: Day 1, Day 2

Interpreting intelligent machines? pt. 1

Trust, Kluckstraße 25, 10785 Berlin

project retrospective / transformers: models of? / cartography / labour / knowledges and practices of interpretability

- Break: Arrival, 10:00

Introduction

10:20 talk, roundtable

What is AI Forensics? A retrospective introduction

Lead PI Matteo Pasquinelli (HfG Karlsruhe/U Venice) opens the symposium: Why forensics?—The search for a methodology commensurate to the societal transformations wrought by AI. Exploding (the view of the) AI production pipeline. Characterising the project.

Discussion

Cartography of transformer hegemony

11:15 panel

Revisiting milestones in the transformers’ ascent, these contributions offer critical reappraisals that complicate the eponymous catchphrase of attention being all you need. They put forth pieces of an ‘alternative chronology’ of these models.

The Cost of Language. Tokenization as a Metric of Labour.

Paolo Caffoni (HfG Karlsruhe) investigates machine translation, the birthplace of the transformer’s ascent

Discussion

Feminist methods for XAI and cartography as method

Goda Klumbytė (U Kassel) introduces cartography as a method

Cartography: language models, labour, and the social

Discussion

- Break: Lunch, 13:15

Vector epistemology

15:00 panel

The techniques and mechanisms underlying the shape of AI, and their form(s) of interpretation.

Models of vision or visual culture?

Leonardo Impett (U Cambridge/B Hertziana) presents work on OpenAI’s multimodal CLIP and previews Vector Media (forthcoming 2026, meson press), coauthored with Fabian Offert (UCSB)

Beyond SAEs for Mechanistic Interpretability

Patrick Leask (Durham U) contextualises the sparse autoencoder approach within the wider frame of mechanistic interpretability, also discussing the significance of recent findings (2025)

Discussion

What kind of knowledge and practice is interpreting AI?

- Break: Informal, 17:00

Interpreting intelligent machines? pt. 2

Trust, Kluckstraße 25, 10785 Berlin

historical epistemology / statistics / pedagogy / arts

- Break: Arrival, 11:00

Statistical normativities

11:15 panel

Calculating health

Giulia Gandolfi (HfG Karlsruhe) on the historical epistemology of correlational knowledge in medicine

AI interpretability and accountability in the humanitarian sector

Arif Kornweitz (HfG Karlsruhe) presents his PhD project—from the inception of prediction in political philosophy to the “hard problem of conflict prediction”

Discussion

- Break: Lunch, 13:15

Learning otherwise

15:00 panel

Worlding beyond benchmarking

Eleanor Dare (U Cambridge) discusses worlding and arts-based research in the context of a series of pedagogical workshops on AI held in Cambridge, England

Affective & embodied knowledge in/for XAI

Goda Klumbytė (U Kassel) on the need for new modes of knowing, with a report from the Beyond Explainability workshop

Discussion

Announcements: Outputs (forthcoming)

16:45

Two books, a workshop, and an anthology of boundary concepts: what the remainder of the project has in store

predictions